Blueprint for Trustworthy AI: A Comprehensive Guide to RAG Evaluation

If you are building a Retrieval-Augmented Generation (RAG) system today, you are likely facing a terrifying reality: your AI is a black box that can lie with total confidence. Building a blueprint for trustworthy AI isn't just about choosing the right model; it's about creating a rigorous evaluation framework that catches hallucinations before they reach your users.

In the early days of generative AI, we relied on simple word-matching metrics. But as systems have evolved, so must our testing strategies. This guide will walk you through the industry standards for RAG evaluation, the critical RAG Triad, and why human expertise remains the ultimate safeguard in an automated world.

Table of Contents

- The Trust Gap in Generative AI

- The Evolution of AI Evaluation: Beyond BLEU and ROUGE

- The RAG Triad: Your Core Framework

- Industry Standards: The Shift to LLM-as-a-Judge

- Identifying and Mitigating AI Biases

- Why Human-in-the-Loop (HITL) is Non-Negotiable

- The Learner's Checklist for Trustworthy AI

The Trust Gap in Generative AI

The primary barrier to the widespread adoption of AI in the enterprise is not a lack of capability, but a lack of trust. When a chatbot provides a medical recommendation, a financial forecast, or a legal summary, the cost of an error is not just a "bad user experience"—it can be a significant legal and ethical liability.

A blueprint for trustworthy AI starts with the acknowledgment that Large Language Models (LLMs) are probabilistic, not deterministic. They don't "know" facts in the way a database does; they predict the next most likely token based on patterns in their training data. In a RAG architecture, we attempt to ground these predictions in real, verifiable data, but the connection between the retrieved data and the generated answer is often fragile.

To bridge this trust gap, we need more than just "vibes-based" testing or manual spot-checking. We need a systematic, repeatable, and auditable way to prove that our AI is behaving as intended. This is where modern evaluation frameworks come into play.

The Evolution of AI Evaluation: Beyond BLEU and ROUGE

Traditional metrics like BLEU (Bilingual Evaluation Understudy) and ROUGE (Recall-Oriented Understudy for Gisting Evaluation) were designed for machine translation and summarization. They work by counting how many words in the AI's response match a "gold standard" human answer.

Why Traditional Metrics Fail RAG

While useful for simple tasks, these metrics are fundamentally broken for modern RAG systems for several reasons:

- Semantic Blindness: An AI can produce a response that shares 90% of its words with a correct answer but changes a single "not" to a "yes," creating a dangerous hallucination. Traditional metrics would give this a high score because the word overlap is high.

- Vocabulary Rigidity: A model might provide a perfectly accurate answer using entirely different vocabulary or sentence structure than the reference answer. Traditional metrics would unfairly penalize this creativity.

- Lack of Contextual Awareness: These metrics don't account for the retrieved context. They only compare the output to a reference, ignoring whether the AI actually used the provided data or hallucinated from its internal weights.

The Shift to Semantic Evaluation

To build trustworthy AI, we have moved toward Semantic Evaluation. This approach focuses on the meaning and intent of the response rather than just the characters on the page. Semantic evaluation allows us to detect hallucinations that "look" correct but are factually wrong, and it provides a "reason" for the score, which is critical for debugging and continuous improvement.

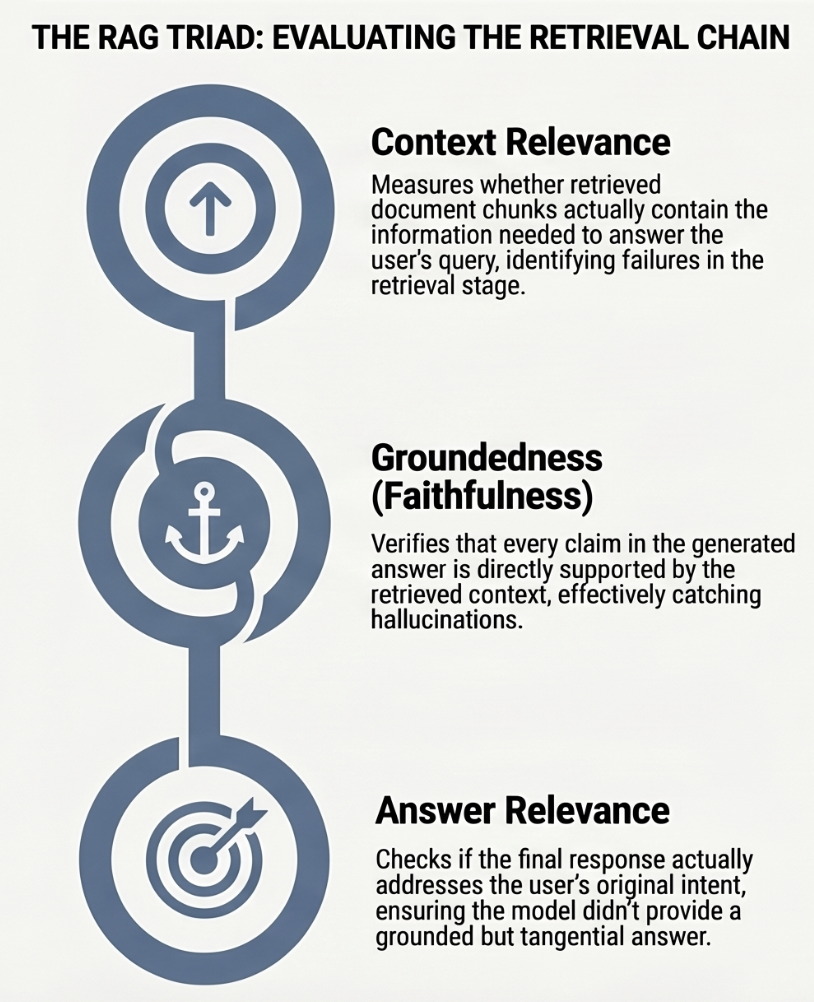

The RAG Triad: Your Core Framework

The RAG Triad is the industry-standard blueprint for evaluating RAG systems. It breaks down the complex interaction between the user's query, the retrieved data, and the final generated answer into three measurable pillars. By isolating these three relationships, you can identify exactly where your system is failing.

The RAG Triad is the industry-standard blueprint for evaluating RAG systems. It breaks down the complex interaction between the user's query, the retrieved data, and the final generated answer into three measurable pillars. By isolating these three relationships, you can identify exactly where your system is failing.

1. Context Relevance (Retrieval Quality)

Relationship: Query ↔ Context Before the AI even starts writing, it has to find the right information. Context Relevance measures whether your retrieval engine (vector database) actually found the documents needed to answer the question.

- Failure Mode: "Retrieval Failure." If the context is irrelevant, the AI is forced to rely on its own internal "memory," which often leads to making up facts.

- Example: A user asks about "2024 tax changes," but the system retrieves documents from 2022. The AI will likely give an outdated or hallucinated answer because it wasn't given the right facts to begin with.

- Industry Standard: This is often measured using metrics like Contextual Precision and Contextual Recall.

2. Groundedness / Faithfulness (Generation Quality)

Relationship: Context ↔ Answer Once the information is retrieved, the AI must stay within the bounds of that data. Groundedness verifies that every claim in the AI’s response is directly supported by the retrieved context.

- Failure Mode: "Fabrication." The AI might find the right context but then add "extra" facts from its internal training that weren't in your documents, or it might misinterpret a specific statistic.

- Example: The context says "Revenue grew by 5%," but the AI reports "Revenue grew by 15%." Even if 15% is true in the real world, if it wasn't in the context, the model is not being "faithful" to the source.

3. Answer Relevance (User Satisfaction)

Relationship: Query ↔ Answer Finally, did the AI actually answer the user? Answer Relevance checks if the response addresses the user’s original intent.

- Failure Mode: "Irrelevance." The AI might provide a factually grounded answer that is technically true based on the text but fails to answer what the user actually asked.

- Example: The user asks "How do I reset my password?", and the AI provides a 5-paragraph history of the company's security protocols without giving the actual reset steps.

Industry Standards: The Shift to LLM-as-a-Judge

The current gold standard for implementing the RAG Triad is a methodology called LLM-as-a-Judge. Instead of using rigid math formulas, you should use a highly capable "frontier" model to act as an automated auditor.

The Evaluation Workflow

- Collection: Gather the user query, the retrieved context, and the AI’s response.

- Structured Rubrics: The judge is given a specific set of rules (a rubric) for what constitutes a "good" answer. These rubrics should be written in plain English to ensure they align with human expertise.

- Chain-of-Thought (CoT) Reasoning: We ask the judge to perform a "Reasoning Audit," explaining its logic before giving a score. This allows us to "peek into the black box" to see if the judge is rewarding substance or just falling for "fluff."

- Structured Output: The judge returns a machine-readable format (like JSON) containing a score, a pass/fail status, and the reasoning.

Advanced Calibration: The Rogan-Gladen Estimator

In high-stakes scenarios, raw scores can be misleading. To find the truth, you should use the Rogan-Gladen Estimator. This statistical tool compares the judge against a "Gold Set" (human-labeled examples) to find its Sensitivity (how well it catches truths) and Specificity (how well it detects lies). This math allows us to report performance with statistically sound confidence intervals, providing a safety net against "false progress" where a model improves at tricking a judge rather than getting smarter.

Identifying and Mitigating AI Biases

While the shift to LLM-as-a-judge provides the semantic depth needed for RAG evaluation, it introduces a new challenge: Judge Bias. When you use an AI to judge another AI, you aren't just getting a neutral observer; you are inheriting the architectural quirks and training-data prejudices of the judge model itself.

Understanding why these biases happen is critical for any engineer building a blueprint for trustworthy AI. Most AI biases are not "errors" in the traditional sense, but rather side-effects of how the Transformer architecture processes information. For example, the "Attention Mechanism" that allows LLMs to understand context also makes them prone to over-weighting information based on its position or length.

To maintain the integrity of your evaluations, you must actively identify and mitigate these common biases:

1. Position Bias1 (The "First-is-Best" Problem)

This is a direct side-effect of how models process sequences. LLMs often show a statistically significant preference for the first (or sometimes last) response they see in a comparison, regardless of quality.

- Why it happens: The model's attention weights can become skewed toward the beginning of the prompt.

- Mitigation: Position Swapping. Run every evaluation twice, swapping the order of the candidate answers. If the judge changes its mind based on the order, you know you have a position bias.

2. Verbosity Bias2 (The "Yapping" Problem)

Judges tend to give higher scores to longer, more "confident-sounding" answers, even if they contain less actual information or more "fluff."

- Why it happens: Models often equate token volume with informational density during training.

- Mitigation: Strict Rubrics. Explicitly instruct the judge to penalize "wordiness" and reward conciseness. You can even include a "conciseness" metric in your custom rubrics.

3. Self-Enhancement Bias (The "Family" Problem)

A model might favor outputs that "sound" like its own writing style or come from the same model family (e.g., GPT-4o favoring GPT-3.5).

- Why it happens: Models recognize the specific linguistic patterns, formatting, and "vibe" of their own training data.

- Mitigation: Model Diversity. Always use a judge from a different model family than the one being tested (e.g., use Claude to judge a Llama-based model).

4. Authority Bias (The "Confidence" Problem)

Undue weight is often given to formal citations, professional formatting, or a confident tone, even if the underlying facts are wrong.

- Why it happens: The model is trained to associate professional formatting with high-quality, authoritative content.

- Mitigation: Masking. Neutralize or remove identity markers, formal citations, and specific formatting styles during the evaluation phase to force the judge to focus on the substance.

| Bias Name | What It Is | Mitigation Strategy |

|---|---|---|

| Position Bias | Favoring the first or last response in a list. | Swap the order of answers and run the evaluation twice. |

| Verbosity Bias | Favoring longer, "fluffier" responses over concise ones. | Explicitly instruct the judge to penalize "wordiness." |

| Self-Enhancement | Favoring outputs that sound like the judge's own model family. | Use a judge from a different model family. |

| Authority Bias | Undue weight given to formal citations or a confident tone. | Neutralize or remove identity markers during evaluation. |

Why Human-in-the-Loop (HITL) is Non-Negotiable

Automated evaluation is powerful, but it is not a replacement for human judgment. Human-in-the-loop (HITL) is the final, critical layer of any trustworthy AI system. AI judges are excellent at scaling your testing, but they lack the "common sense" and deep domain expertise that humans possess.

The Role of the Human Expert

- Calibration: Humans must periodically review a subset of the AI judge's scores to ensure the "Judge" hasn't drifted or become too lenient.

- Edge Case Discovery: AI judges are only as good as their prompts. Humans are better at spotting "unknown unknowns"—new types of failures that the automated system wasn't programmed to look for.

- Proprietary Nuance: As discussed in our guide on handling proprietary jargon, general-purpose models often miss the subtle nuances of specialized industries like law or medicine.

Implementing HITL Effectively

To implement HITL without slowing down your development cycle:

- Random Sampling: Audit 5-10% of all automated evaluations.

- Disagreement Analysis: Focus human review on cases where the AI judge was "unsure" (e.g., scores between 0.4 and 0.6).

- Feedback Loops: Use human corrections to update your few-shot examples and rubrics. This turns your evaluation system into a "flywheel" that gets smarter over time.

The Learner's Checklist for Trustworthy AI

If you are just starting your journey into RAG evaluation, use this checklist to ensure your system is built on a solid foundation:

- Define Your Golden Dataset: Create a set of 50-100 "perfect" query-context-answer triplets that represent your system's ideal behavior.

- Implement the RAG Triad: Don't just test the final answer. Measure Context Relevance, Groundedness, and Answer Relevance separately.

- Use Chain-of-Thought: Always ask your AI judge to provide a

reasonfor its score. - Set Clear Thresholds: Decide what a "passing" score looks like for your domain (e.g., 0.7 for creative apps, 0.95 for medical apps).

- Audit the Judge: Have a Subject Matter Expert (SME) review 10% of all automated evaluations.

- Monitor for Bias: Regularly rotate your judge models to ensure you aren't falling victim to self-enhancement or position bias.

- Neutralize the Attention Mechanism: Rotate answer positions to fix Position Bias.

- Calibrate for High Stakes: Use the Rogan-Gladen formula to ensure your progress is real, not an artifact.

- Document Your Rubrics: Ensure your evaluation criteria are written in plain English and shared across the team.

- Iterate on Prompts: Treat your evaluation prompts as code. Version them and test them just like your application code.

Conclusion: Your Path to Production

Building a blueprint for trustworthy AI is a continuous process, not a one-time setup. By moving beyond simple word-matching and embracing the RAG Triad and LLM-as-a-judge frameworks, you can build AI systems that aren't just powerful, but provably accurate.

Ready to start your evaluation journey? Learn how Evaliphy simplifies RAG testing or explore our guide on tuning custom prompts to make your AI judge a true domain expert.

For a deeper understanding of the underlying RAG architecture, we highly recommend exploring LlamaIndex's guide on understanding RAG.